Yuta Oshima

I’m a PhD student at The University of Tokyo, mentored by Professor Yutaka Matsuo.

My research goal is to develop interactive image and video generation models, letting anyone create and shape visual worlds with intuitive, flexible, and precise control.

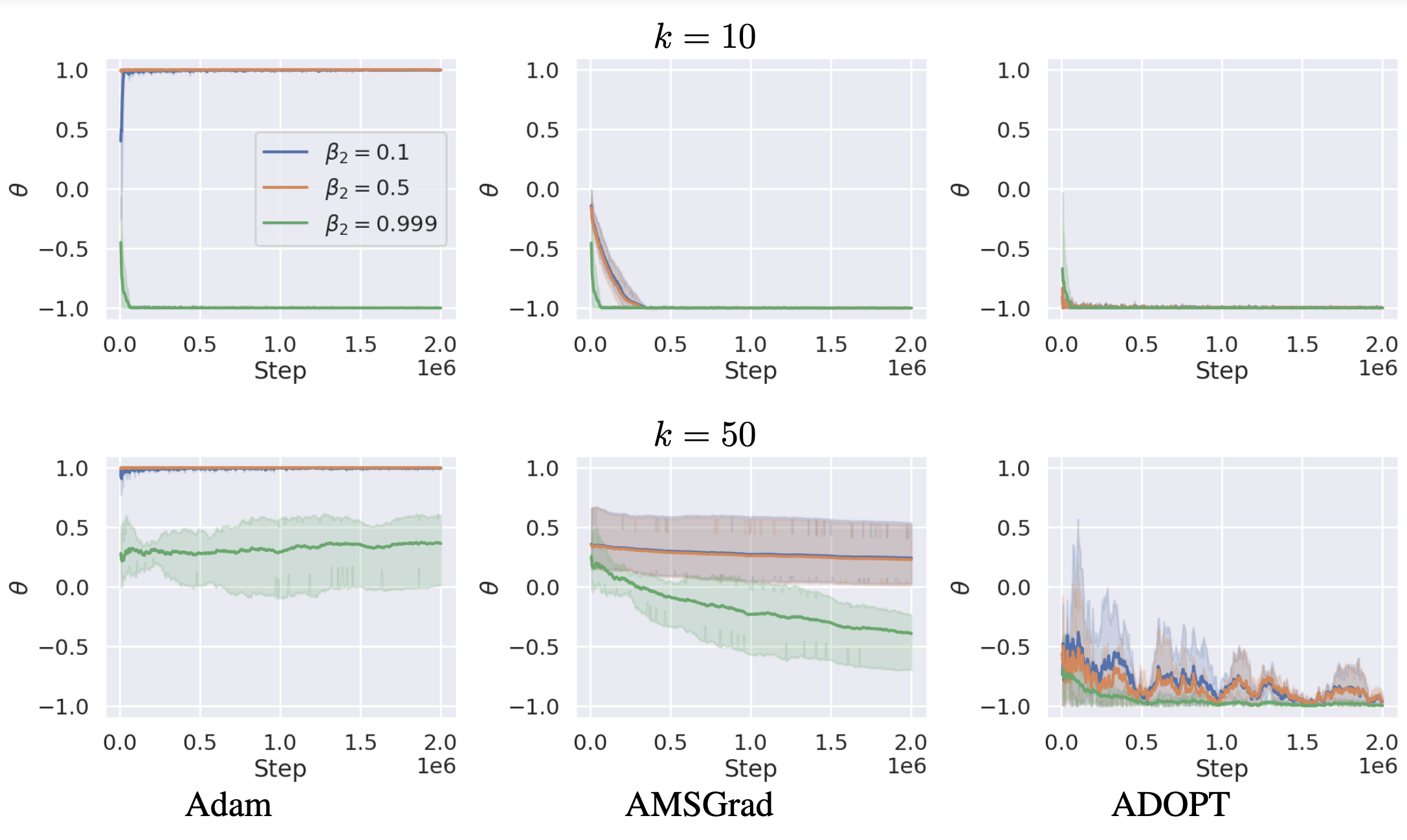

Toward this goal, I currently focus on improving the controllability of vision foundation models, such as diffusion models, through alignment and instruction following, enabling fine-grained visual generation.

selected publications

- CVPR 2026 Main

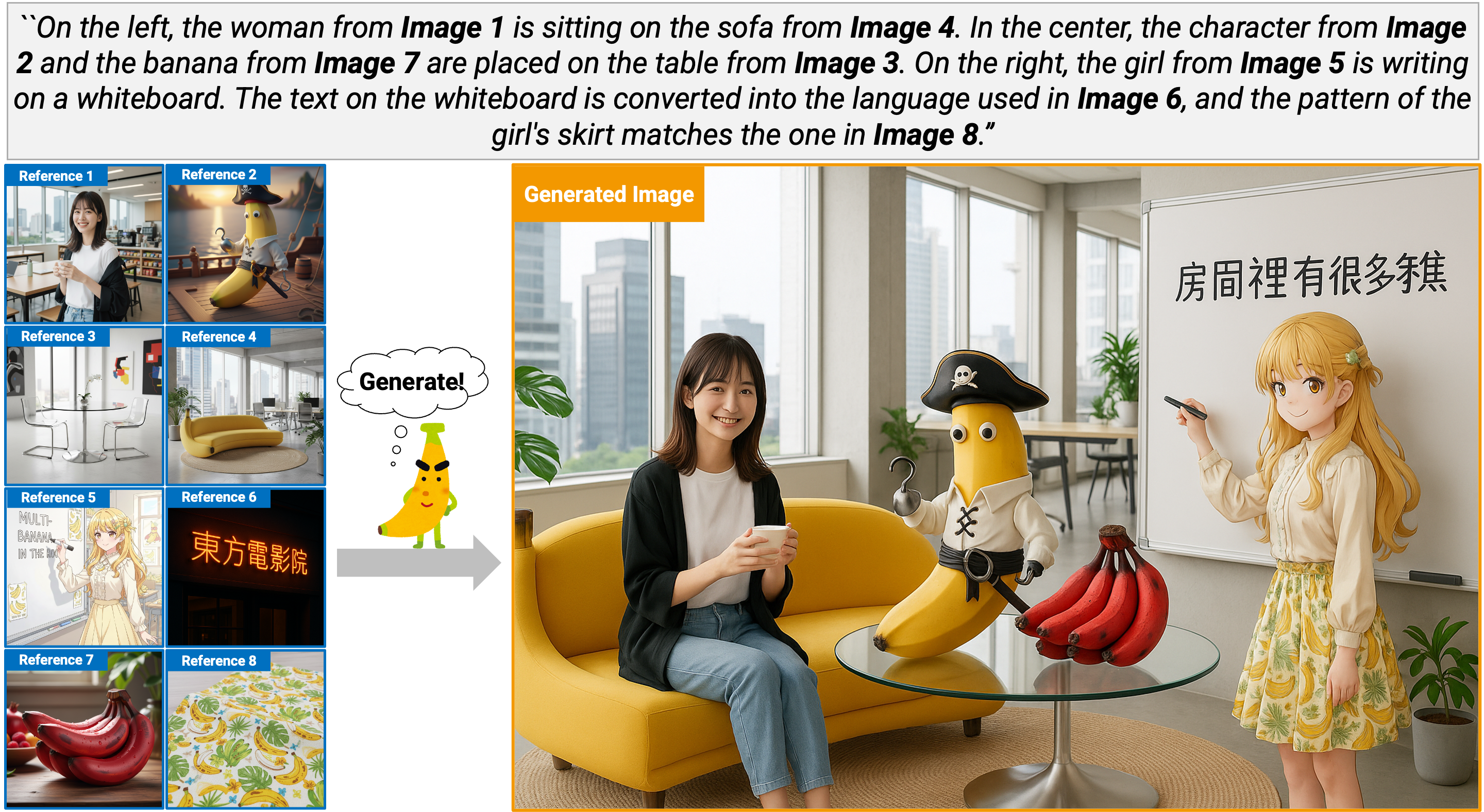

MultiBanana: A Challenging Benchmark for Multi-Reference Text-to-Image Generation. In the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2026
